The Internet of Things (IoT) is all about data. Big data lives in core data centers, where millions of sensor inputs are aggregated, trends are identified and actionable insights are delivered. Fast data pulses through thousands of individual edge gateways, where it is processed within microseconds and acted upon in real time. Forecasts indicate that IoT devices (autonomous cars, drones, robots, machines, equipment, surveillance cameras and so forth) will generate more data each year.

For example, a self-driving car can generate approximately three gigabytes of data per second from a variety of sensors—if the average car is driven about 300 hours per year, a single vehicle could generate about 72 gigabytes per day and about 26 terabytes of data annually. Processing data on this scale at IoT speed requires a modern storage interface, such as NVMe.
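The daily and annual totals can be cross-checked with a few lines of arithmetic. The sketch below takes the 72 gigabytes per day and 300 driving hours per year as given, and assumes decimal units and a 365-day year; the function names are illustrative only.

```python
GB = 10**9   # decimal gigabyte
TB = 10**12  # decimal terabyte

def annual_volume(daily_bytes: int, days_per_year: int = 365) -> int:
    """Total bytes per year for a given daily volume."""
    return daily_bytes * days_per_year

def average_rate(total_bytes: int, active_hours: int) -> float:
    """Average bytes per second over the hours the vehicle is actually driven."""
    return total_bytes / (active_hours * 3600)

per_year = annual_volume(72 * GB)
rate = average_rate(per_year, active_hours=300)
print(f"{per_year / TB:.2f} TB per year")
print(f"{rate / 10**6:.1f} MB/s averaged over driving time")
```

Under these assumptions the totals come to roughly 26 terabytes per year, and the sustained average while driving is in the tens of megabytes per second. The per-second figure is therefore probably best read as a peak or raw sensor rate rather than the amount of data retained.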

The Non-Volatile Memory Express (NVMe) standard, an interconnect protocol used for accessing high-speed storage media, is designed to connect high-performance NAND flash memory to compute resources over native PCI Express (PCIe) links. Its streamlined memory interface, command set and queue design eliminate the legacy SCSI command stack and direct-attached storage (DAS) bottlenecks associated with traditional hard drive interfaces, resulting in a uniquely tuned input-output (I/O) architecture optimized for solid state media.

NVMe connects directly to the CPU via a PCIe bus, minimizing the storage driver stack to deliver significantly faster performance than traditional interfaces. As such, it is well suited for aggregating diverse data sources from IoT workloads, including from thousands of sensors streaming data at a rate of thousands of times per second. The advanced performance of NVMe makes it essential for aggregating diverse IoT data streams into databases, while providing enough bandwidth and IOPS to also perform analytics.

The new generation of NVMe-compliant SSDs is enabling more responsive applications while reducing the overall number of storage devices required, along with their physical footprint. As a result, NVMe SSDs are accelerating business opportunities in all areas of the data center and at the edge.

Built for Fast Data, Edge Gateway Processing

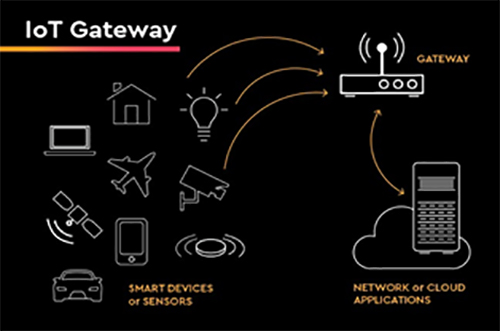

IoT devices are not always connected at the edge or with other devices, and may require gateways for connectivity. A gateway serves as an aggregation point at which captured data can also be analyzed. Data from a myriad of local sensors is processed at the edge, where the gateway provides two main functions for the IoT apps in question: autonomous response and aggregation. By responding quickly to easy-to-handle events, and by filtering a deluge of data down to only the interesting bits, these gateways reduce the data traffic pressure on both the backhaul communications infrastructure and core data processing.
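The two gateway functions described above can be sketched in a few lines. This is a minimal illustration, not a real gateway implementation: the `Reading` type, the `process_batch` function, the sensor names and the alarm threshold are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class Reading:
    sensor_id: str
    value: float

def process_batch(readings, alarm_threshold):
    """Return (sensors needing a local response, per-sensor summary)."""
    # Autonomous response: flag sensors locally, without a round trip to the core.
    alarms = sorted({r.sensor_id for r in readings if r.value > alarm_threshold})

    # Aggregation: collapse raw readings into one small summary per sensor.
    grouped = {}
    for r in readings:
        grouped.setdefault(r.sensor_id, []).append(r.value)
    summary = {
        sid: {"count": len(vals), "mean": mean(vals), "max": max(vals)}
        for sid, vals in grouped.items()
    }
    return alarms, summary

batch = [Reading("temp-1", 20.0), Reading("temp-1", 22.0), Reading("temp-2", 95.0)]
alarms, summary = process_batch(batch, alarm_threshold=90.0)
print(alarms)
print(summary["temp-1"])
```

Only the alarm list and the compact summary would need to travel over the backhaul link, which is how a gateway of this kind relieves pressure on the core.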

Similar to the sensors with which they communicate, edge gateways are available in a variety of classes to match specific user needs. The smallest edge gateways contain an embedded-class system-on-a-chip (SoC) processor that utilizes such flash interfaces as UFS or e.MMC for storage. At the higher end are edge gateways with server-class CPUs, gigabytes of capacity and full NVMe compliance. Regardless of the class of gateway deployed, those units supported by NVMe accelerate data processing in two ways: by reducing I/O latency and by increasing I/O parallelism.

NVMe reduces I/O latency through its direct CPU connection so that compliant SSDs can speak directly to the processor without a storage controller chip or protocol conversion that slows overall performance. As a result, I/O latencies can potentially be reduced to the low tens of microseconds versus more than a hundred microseconds for many SATA or SAS SSD deployments.

NVMe can also increase I/O parallelism, which matters when large numbers of sensor inputs are aggregated. In these scenarios, data aggregation driven by an edge gateway can involve many small parallel I/O operations. Though individual sensor reports are typically small, there can be hundreds to thousands of these reports delivered every second, requiring a very high I/O queue depth to achieve good performance. While SATA- and SAS-based SSDs are limited to a maximum of 32 or 256 outstanding I/O operations, respectively, at any time, the NVMe specification allows up to about 64,000 queues, each of which can hold up to about 64,000 outstanding commands. Though individual NVMe SSDs vary in the number of queues and the queue depth they actually support, in general they provide far higher parallelism than SATA or SAS SSDs can deliver.
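One rough way to see why queue depth matters is Little's law: sustained throughput cannot exceed the number of outstanding operations divided by the latency of each one. The sketch below applies that bound to the SATA and SAS limits cited above. The 100-microsecond latency and the 8-queue, 128-deep NVMe configuration are illustrative assumptions, not measurements of any particular drive.

```python
def iops_ceiling(outstanding_ios: int, latency_us: float) -> float:
    """Little's law: throughput = concurrency / latency (latency in microseconds)."""
    return outstanding_ios * 1_000_000 / latency_us

ASSUMED_LATENCY_US = 100  # illustrative per-I/O latency, not a measured value

interfaces = {
    "SATA": 32,    # maximum outstanding I/Os cited in the text
    "SAS": 256,    # maximum outstanding I/Os cited in the text
    "NVMe (hypothetical 8 queues x 128 deep)": 8 * 128,
}
ceilings = {
    name: iops_ceiling(depth, ASSUMED_LATENCY_US)
    for name, depth in interfaces.items()
}
for name, value in ceilings.items():
    print(f"{name}: {value:,.0f} IOPS ceiling")
```

These are upper bounds from queue depth alone. Real drives are also limited by their controllers and flash media, so the point is the relative headroom rather than the absolute numbers.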

Built for Big Data, Core Processing

Data that is transmitted from hundreds or thousands of edge gateways is converted into knowledge and actions by core processing in the cloud. Whereas an edge gateway may handle a single factory floor or city block, a centralized processing core is built to handle an entire company’s set of factories or an entire civil infrastructure. Aggregating and processing this type of big data involves tools such as Apache Spark and Apache Hadoop that take clusters of servers and meld them into a single unified application stack.

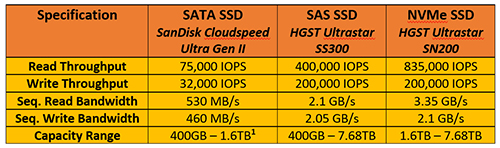

Supporting the high aggregate number of data streams from edge gateways requires a significant amount of storage bandwidth and performance stability. Not only does the storage need to support 24-7 ingest of new data, but it also needs enough extra bandwidth and I/O capacity to handle batch and ad hoc queries against this data. A single high-performance U.2 form factor NVMe SSD (with x4 PCIe data lanes) can provide more than three gigabytes per second of throughput (Table 1). Matching this bandwidth would take five or more SATA SSDs, given that each delivers about 600 megabytes per second.
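The drive-count comparison is a simple ceiling division. The sketch below uses the round figures from the text (about 3,000 megabytes per second for the NVMe SSD and about 600 for each SATA SSD); the function name is illustrative, and the calculation ignores controller and host-side overhead.

```python
def drives_needed(target_mb_per_s: int, per_drive_mb_per_s: int) -> int:
    """Smallest whole number of drives whose combined bandwidth meets the target."""
    return -(-target_mb_per_s // per_drive_mb_per_s)  # integer ceiling division

NVME_MB_PER_S = 3000  # "more than three gigabytes per second", rounded down
SATA_MB_PER_S = 600   # approximate per-drive SATA bandwidth from the text

sata_count = drives_needed(NVME_MB_PER_S, SATA_MB_PER_S)
print(sata_count)
```

Because the NVMe figure is stated as "more than" three gigabytes per second, any throughput above the round number pushes the SATA count past five, which is why the text says "five or more".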

Processing big data generally involves streaming large blocks of historical data, reading and writing small random blocks for intermediate processing, and writing the results in large blocks. The same low latency and high queue depth that make NVMe a good fit for edge gateways are just as beneficial at the core, where they extend what the cluster-computing tools used to process and act on streamed data can do.

Final Thoughts

The Internet of Things is driven by data. Edge gateways that process fast data need the low latency and high parallelism of NVMe SSDs to manage the massive amounts of aggregated sensor data they collect. At the core of the data center, the high bandwidth and deep queues of NVMe SSDs enable faster and more efficient big data analysis.

Initially reserved for high-performance, high-capacity workloads, NVMe SSDs are creating a convergence of compute and storage, and are penetrating other areas of the data center previously reserved for legacy-based SSDs. With more than three gigabytes per second throughput across hundreds of commands and dozens of processing cores, NVMe delivers memory speed between application, CPU and SSD. Not only does this lower latencies and deliver multiple times the performance of SCSI, but it also keeps those new CPUs busy, maximizing your ROI. NVMe is a game changer—at the edge, in the core, and virtually across all computer architectures, today and tomorrow.

Ulrich Hansen is focused on product planning, product line management and technical marketing for Western Digital’s enterprise SSD product portfolio. This includes defining the company’s next-generation solid-state products, while ensuring that new products and technologies are successfully introduced into the enterprise and data-center markets. He is also responsible for assessing market opportunities and emerging technologies, defining requirements for new products, and aligning customers and industry partners with Western Digital’s product and technology strategies. Mr. Hansen has more than 20 years of experience in a number of high-technology sectors, including servers, storage, and network and communication systems. Prior to joining Western Digital through the HGST acquisition, he served as the senior director of marketing for Entorian Technologies, and has held senior positions in product development, marketing and corporate strategy, with management consultancies and technology companies that include A.T. Kearney and Dell. Mr. Hansen holds a Master’s degree in business administration from the University of Texas at Austin, and a Master’s degree in electrical engineering from RWTH Aachen in Germany.