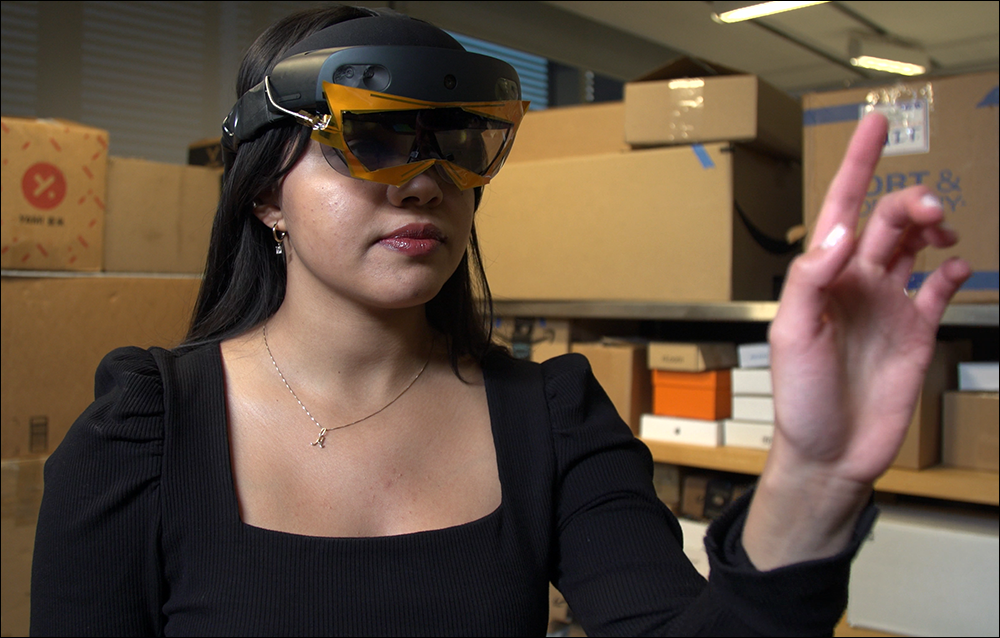

Researchers at the Massachusetts Institute of Technology (MIT) have developed a set of augmented reality (AR) glasses with a built-in RFID reader and antenna that help users identify items in their vicinity by combining RFID tag reads with camera-based sensing. The X-AR headset’s wideband antenna is designed to conform to the shape of Microsoft’s HoloLens visor. An algorithm that exploits the user’s natural movement helps localize RFID-tagged objects relative to the user’s location, and an RF-visual verification tool confirms whether an individual is picking up the right items by comparing hand movements with the tag’s location.

After developing and testing the headset, the researchers report that the system can locate tags to within 9.8 centimeters (3.9 inches), using wideband operation to extend the bandwidth of 900 MHz ultrahigh-frequency (UHF) RFID transmissions. The team reports that it can determine whether a user is picking up the correct RFID-tagged object with 95 percent accuracy. The solution was developed to give AR headset wearers a kind of X-ray vision, letting them see the locations of items that are hidden from direct view.

AR tools are already used in industrial, healthcare and other work environments to improve accuracy and efficiency, but they are limited in their ability to identify objects around the user. “A lot of devices can perceive the environment,” using technologies like optical sensors, “but they are missing access to items that may fall outside of the line of sight,” says MIT researcher Tara Boroushaki.

To extend that perception, the group decided to build a solution employing RFID, based on its ability to wirelessly energize and receive responses from tagged items, even when they are located behind walls or other objects, or inside sealed boxes. “We wanted a system [by] which we could perceive tagged items with an AR headset,” Boroushaki says, “and also identify specifically where they are.” The solution builds on HoloLens technology, whose AR and computer-vision functions create a 3D map of a space as an individual moves through it, providing contextual data about the wearer’s location.

Customized Antenna and Reader

What makes this new headset different, the team explains, is the addition of an RFID antenna that enables two-way communication with RFID tags. The antenna wraps directly around the HoloLens visor without blocking the headset’s cameras. “When it came to the reader and antenna, it not only had to accommodate the existing cameras, but also needed to be lightweight,” says MIT researcher Aline Eid, because the headset is worn by people moving around a space such as a warehouse, picking and packing goods. Additionally, she says, “We wanted something flexible and transparent.”

The system’s wideband reading capability improves the granularity of tag localization while still meeting the technical requirements of UHF RFID. The design uses a specialized reader, along with slots integrated into the antenna on the top and bottom lines around the nose, which extend the transmission bandwidth by about 200 MHz. The system captures a combination of time-of-flight and angle-of-arrival data, then computes each tag’s location by combining those measurements with the user’s own motion.
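
Why bandwidth matters can be illustrated with a textbook radar rule of thumb: for a round-trip (backscatter) link, time of flight alone can only separate two reflections whose ranges differ by more than about c/(2B), where B is the bandwidth. The sketch below is a generic calculation, not the X-AR team’s model. It compares the 26 MHz of the 902 to 928 MHz U.S. UHF RFID band with an assumed bandwidth of roughly 200 MHz, loosely based on the figure cited in the paragraph above. The centimeter-level accuracy the team reports comes from combining many measurements with the user’s motion, not from bandwidth alone.

```python
# Speed of light in m/s.
C = 299_792_458.0


def range_resolution_m(bandwidth_hz: float) -> float:
    """Textbook time-of-flight range resolution for a round-trip link: c / (2B)."""
    if bandwidth_hz <= 0:
        raise ValueError("bandwidth must be positive")
    return C / (2.0 * bandwidth_hz)


# 26 MHz: the U.S. 902-928 MHz UHF RFID band.
# 200 MHz: assumed order of magnitude for the extended bandwidth (illustrative only).
for label, bw in [("902-928 MHz band", 26e6), ("~200 MHz wideband (assumed)", 200e6)]:
    print(f"{label}: {range_resolution_m(bw):.2f} m")
```

Under these assumptions it prints about 5.77 m for the narrow band and 0.75 m for the wider one, which is why extending the bandwidth sharpens the time-of-flight estimate before any motion-based processing is applied.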

“We realized that a lot of our users are going to be moving through the environment,” Eid explains, such as walking through a warehouse or a retail store. “By combining all of these different measurements, we were able to get this really fine-grained localization.” The system works with commercial off-the-shelf tags, Eid notes, so it can operate with any UHF RFID tags in the environment.

The data can be collected passively as workers go about their jobs, or actively as employees use the device to seek specific tagged items. Individuals might walk up and down aisles, reading the tags on items within range of the headset and associating each tag’s location with the headset’s location. That information can then be stored on the headset as well as on a server.
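
One way to picture this passive data collection is as a log that pairs each tag read with where the headset was at that moment, keeping the most recent sighting per tag. This is a minimal sketch under stated assumptions: the class names, the tag IDs and the use of the headset position as a stand-in for the tag position are hypothetical, and the article does not describe the team’s actual data model.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Position = Tuple[float, float, float]  # headset position in the AR map frame, meters (assumed)


@dataclass
class TagRead:
    tag_id: str
    headset_pos: Position
    timestamp: float  # seconds


@dataclass
class TagReadLog:
    """Hypothetical store pairing every tag read with the headset's position at that time."""
    reads: List[TagRead] = field(default_factory=list)
    latest: Dict[str, TagRead] = field(default_factory=dict)

    def record(self, tag_id: str, headset_pos: Position, timestamp: float) -> None:
        read = TagRead(tag_id, headset_pos, timestamp)
        self.reads.append(read)  # full history, e.g. for later upload to a server
        prev = self.latest.get(tag_id)
        if prev is None or timestamp >= prev.timestamp:
            self.latest[tag_id] = read  # keep only the most recent sighting per tag

    def last_known_position(self, tag_id: str) -> Optional[Position]:
        read = self.latest.get(tag_id)
        return read.headset_pos if read is not None else None


log = TagReadLog()
log.record("TAG-0001", (1.0, 0.0, 1.6), timestamp=10.0)
log.record("TAG-0001", (4.0, 2.0, 1.6), timestamp=25.0)
print(log.last_known_position("TAG-0001"))  # (4.0, 2.0, 1.6)
```

Keeping the full history alongside the latest sighting lets the same log serve both wayfinding (latest position) and later server-side processing of all reads.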

Detecting Objects in Warehouses

To locate tags on a 3D map of an environment in which users are typically moving, the system employs synthetic aperture radar (SAR), a technique similar to the one aircraft use to map the Earth’s surface: radar signals are sent and received at many points as the sensor travels along a path, and the results are combined as if they came from a single large antenna. With X-AR, the wearer’s own movement supplies that path. “Then we take this wideband RFID measurement, and we combine it using synthetic aperture radar,” says MIT researcher Laura Dodds, “and you’re able to locate any object in the environment that replies back to us.”
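
The SAR idea can be sketched with a simple backprojection: for each candidate location, undo the phase that a tag there would produce at every headset position and frequency, then sum the results. Only the true location adds up fully in phase. This is a generic textbook sketch, not the X-AR team’s algorithm. It assumes an ideal, noise-free channel, ignores multipath, tag phase offsets and the angle-of-arrival data mentioned above, and uses a made-up walking path, frequencies and tag position. The candidate grid is restricted to the area in front of the wearer, because a straight path alone cannot distinguish a point from its mirror image behind the path.

```python
import cmath
import math

C = 299_792_458.0  # speed of light, m/s


def round_trip_channel(antenna, tag, freq_hz):
    """Ideal backscatter channel: unit amplitude, phase set by the round-trip distance 2d."""
    d = math.dist(antenna, tag)
    return cmath.exp(-1j * 4 * math.pi * d * freq_hz / C)


def sar_localize(positions, measurements, freqs, grid):
    """Backprojection: return the grid point whose hypothesized phases best match the data."""
    best_point, best_power = None, -1.0
    for point in grid:
        acc = 0j
        for pos, row in zip(positions, measurements):
            d = math.dist(pos, point)
            for f, h in zip(freqs, row):
                # Remove the phase a tag at `point` would cause; aligned terms add coherently.
                acc += h * cmath.exp(1j * 4 * math.pi * d * f / C)
        if abs(acc) > best_power:
            best_point, best_power = point, abs(acc)
    return best_point


# Synthetic demo (all values hypothetical): the wearer walks 1 m along the x-axis
# and reads one tag at five frequencies between 800 and 1000 MHz.
path = [(round(0.05 * k, 2), 0.0) for k in range(21)]
freqs = [800e6 + 50e6 * i for i in range(5)]
true_tag = (0.6, 1.5)
meas = [[round_trip_channel(p, true_tag, f) for f in freqs] for p in path]
# Candidate points 0.5-2.5 m in front of the path, on a 5 cm grid.
grid = [(round(0.05 * i, 2), round(0.05 * j, 2)) for i in range(31) for j in range(10, 51)]
print(sar_localize(path, meas, freqs, grid))  # (0.6, 1.5)
```

Using several frequencies and many positions along the path both matter here: the frequency spread contributes range information, while the changing geometry along the walk pins down where along that range the tag sits.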

A real-world example could be a warehouse in which workers use the headset while going about their tasks collecting, moving or locating products. As they move about the site, Dodds explains, the headset reads tags within the vicinity, identifies their locations and stores that data. If an individual is seeking a particular item, they can use the headset to select a product by its description or ID number, then prompt the headset to search for the corresponding RFID tag’s ID.

The system identifies where the user is located. It can provide wayfinding to the tag’s last known location, and it captures tag reads within the headset’s vicinity to help the user pinpoint the item. If multiple individuals are using headsets, their data can be collected on a server and shared. For instance, if an order arrives for a specific product, the system can identify where that tagged item is and alert the worker closest to it to pick it up.
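
The dispatch step can be sketched as a nearest-neighbor query over the headset positions shared on the server. This is a hypothetical sketch: it assumes straight-line distance on a 2D floor plan, and the worker IDs and coordinates are made up. A real deployment would more likely use walking distance along aisles, and the article does not say how the team selects the worker.

```python
import math
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]  # floor-plan coordinates in meters (assumed)


def closest_worker(item_pos: Point, workers: Dict[str, Point]) -> Optional[str]:
    """Return the ID of the worker whose headset is nearest (straight line) to the item."""
    if not workers:
        return None
    return min(workers, key=lambda w: math.dist(workers[w], item_pos))


# Hypothetical shared server state: the item's last known location and live headset positions.
item_last_seen = (12.0, 3.5)
headsets = {"worker-a": (2.0, 1.0), "worker-b": (10.5, 4.0), "worker-c": (20.0, 3.0)}
print(closest_worker(item_last_seen, headsets))  # worker-b
```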

The solution is designed to help employees seek specific tagged goods, according to MIT researcher Maisy Lam. “If you have a database of all the items in the warehouse,” she explains, “the user is able to pick which item or RFID tag they want to find, and then as they walk naturally, we’re taking measurements.” From there, she says, the data is continually updated, and the headset can direct the user toward the tagged object they seek.
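
The search flow Lam describes can be sketched as a lookup from an item description to its tag ID, followed by the distance and direction from the user to that tag’s latest estimated location. Everything here is hypothetical: the dictionaries stand in for the warehouse database and the continually updated location estimates, and the item names and tag IDs are made up.

```python
import math
from typing import Dict, Tuple

# Hypothetical item database: description -> RFID tag ID.
ITEM_DB: Dict[str, str] = {
    "blue shirt, size M": "EPC-0001",
    "blue shirt, size L": "EPC-0002",
}
# Hypothetical latest location estimates per tag, in floor-plan meters.
TAG_LOCATIONS: Dict[str, Tuple[float, float]] = {
    "EPC-0001": (5.0, 2.0),
    "EPC-0002": (5.0, 8.0),
}


def guide_to(description: str, user_pos: Tuple[float, float]) -> Tuple[str, float, float]:
    """Return (tag_id, distance_m, bearing_deg) from the user to the item's tag.
    Bearing is measured counterclockwise from the map's +x axis."""
    tag_id = ITEM_DB[description]
    tx, ty = TAG_LOCATIONS[tag_id]
    dx, dy = tx - user_pos[0], ty - user_pos[1]
    return tag_id, math.hypot(dx, dy), math.degrees(math.atan2(dy, dx))


tag, dist, bearing = guide_to("blue shirt, size L", (2.0, 4.0))
print(tag, round(dist, 2), round(bearing, 1))  # EPC-0002 5.0 53.1
```

In a live system the location table would be refreshed as new measurements arrive while the user walks, so the returned direction would change over time.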

Enabling In-Hand Verification

The system uses in-hand verification technology to help users confirm that they have picked up the right items, using a combination of RFID tag localization and the movement of the users’ own hands. The headset detects whether or not a tag identified for picking is actually moving, and it matches that movement with an individual’s perceived hand movement. If no tag movement is identified, even as the hand is moving, the system can indicate that the user hasn’t picked up the correct product.
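
A simplified version of this check compares how far the hand moved with how far the target tag’s estimated position moved over the same time window. This is a hypothetical sketch: the thresholds, function names and sample tracks are assumptions, and comparing only net displacement is a simplification of the trajectory matching a real system would likely perform.

```python
import math
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float, float]  # position in meters, shared AR map frame (assumed)


def net_displacement(track: Sequence[Point]) -> Point:
    """Vector from the first to the last sample of a track."""
    start, end = track[0], track[-1]
    return (end[0] - start[0], end[1] - start[1], end[2] - start[2])


def verify_pick(hand_track: Sequence[Point], tag_track: Sequence[Point],
                min_hand_motion: float = 0.10, tolerance: float = 0.10) -> Optional[bool]:
    """True if the target tag moved along with the hand, False if it did not,
    None if the hand has not moved enough to decide. Thresholds are hypothetical."""
    hand_move = net_displacement(hand_track)
    if math.hypot(*hand_move) < min_hand_motion:
        return None
    tag_move = net_displacement(tag_track)
    # Right item: the tag's displacement closely matches the hand's.
    return math.dist(hand_move, tag_move) <= tolerance


hand = [(0.0, 0.0, 1.00), (0.0, 0.0, 1.15), (0.0, 0.0, 1.30)]  # hand lifts 30 cm
right_tag = [(0.02, 0.0, 1.00), (0.03, 0.0, 1.16), (0.02, 0.0, 1.31)]
wrong_tag = [(0.50, 0.0, 1.00)] * 3  # stays on the shelf
print(verify_pick(hand, right_tag), verify_pick(hand, wrong_tag))  # True False
```

Returning `None` while the hand is still keeps the check from flagging an error before the user has actually lifted anything.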

In this way, the system can confirm to users that they have the right item in their hands. This function helps workers ensure they have picked up the products they were seeking and prevents errors when two items look very similar, such as two sizes or colors of the same shirt. In addition, if an employee is trying to locate an item that may be inside a closed box, they can pick up the box and the system will determine whether the proper RFID tag is present. If not, they have the wrong box.

So far, Boroushaki says, “We’ve been testing it in a warehouse-type environment. We think that’s the first target scenario that we envisioned this would be deployed in.” In the long term, however, the researchers expect the technology to benefit users in a wide variety of settings, including stores and manufacturing sites. Eventually, consumers could use the technology in their homes to search for everyday objects, like lost keys.

Additional use cases for the technology include inventory or asset tracking at hospitals or pharmacies. The team envisions the solution being used for mechanical tasks as well, such as an engineer or mechanic assembling a complex engine made up of a large number of unique parts. “Someone could work with the AR headset,” Boroushaki notes, and see which part or tool they are picking up at each step.

Moving forward, the team intends to keep working on enabling the devices to integrate with each other and with a back-end server, for applications such as inventory management in large warehouses. “We’re really excited to see where this leads us in the future,” Boroushaki states. In the initial system that the team tested in the university lab, Lam says, data was sent to an edge server for processing. However, she adds, processing can also be done on the headset or in the cloud, “whatever is most relevant for the specific application.”

Although the system was initially designed with an antenna that focuses its radiation forward to communicate with the environment in front of the wearer, it could be configured to capture transmissions from other directions. The headset’s reader antenna achieves a range of 3 to 4 meters (9.8 to 13.1 feet); longer ranges would be possible if the system only needed to detect tags in its vicinity rather than localize them using wideband measurements. “I think our next immediate steps,” Boroushaki says, “probably would just be working on some of the challenges, including extending the range, maybe improving our processing techniques [and] making things faster.”

Key Takeaways:

- The X-AR headset reads RFID tags and tracks the locations and movements of both the tags and the wearer, so that tagged goods can be located and identified more easily.

- The MIT researchers will continue to enhance the product’s capabilities, while considering commercialization options.